I am a first-year PhD student in a combined master’s–PhD program in Software Engineering at the University of Electronic Science and Technology of China (UESTC), under the supervision of Prof. Fan Zhou. Previously, I received my Bachelor of Engineering degree from Fuzhou University.

My research focuses on Robust & Personalized Multimodal Intelligence for non-ideal and dynamic real-world environments. I am enthusiastic about designing multimodal systems that perform effectively under (1) imperfect inputs and environments (e.g., missing modalities, distribution shifts) and (2) user-specific dynamics (e.g., MLLM personalization). I am also interested in multimodal video understanding and detection, where I work on improving the generalization and robustness of detection models in real-world scenarios.

Feel free to contact me if you have any questions about my research or potential collaboration opportunities.

🔥 News

- 2026.05: 💦💦 3 Papers are submitted to NeurIPS 2026. Hoping for a wonderful result.

- 2026.05: 🎉🎉 1 Paper is accepted by ICML 2026! See you in Seoul!

- 2026.04: 💦💦 We release the first comprehensive repository of resources on modality-missing learning at awesome-modality-missing-learning.

- 2026.04: 🎉🎉 1 Paper is accepted by ACL 2026 Findings!

- 2026.03: 💦💦 2 Papers are submitted to ECCV 2026! The Ship of Theseus now sails again.

- 2026.02: 🎉🎉 1 Paper is accepted by TCSVT 2026.

- 2026.02: 🎉🎉 1 Paper is accepted by CVPR 2026 Findings.

- 2025.11: 🎉🎉 3 Papers are accepted by KDD 2026! See you in Jeju!

- 2025.10: 🎉🎉 Received the Postgraduate National Scholarship again.

📝 Selected Publications (*=Equal Contribution, †=Corresponding Author)

🛡 Robust Multimodal Learning

🧩 Robust Against Missing Modalities

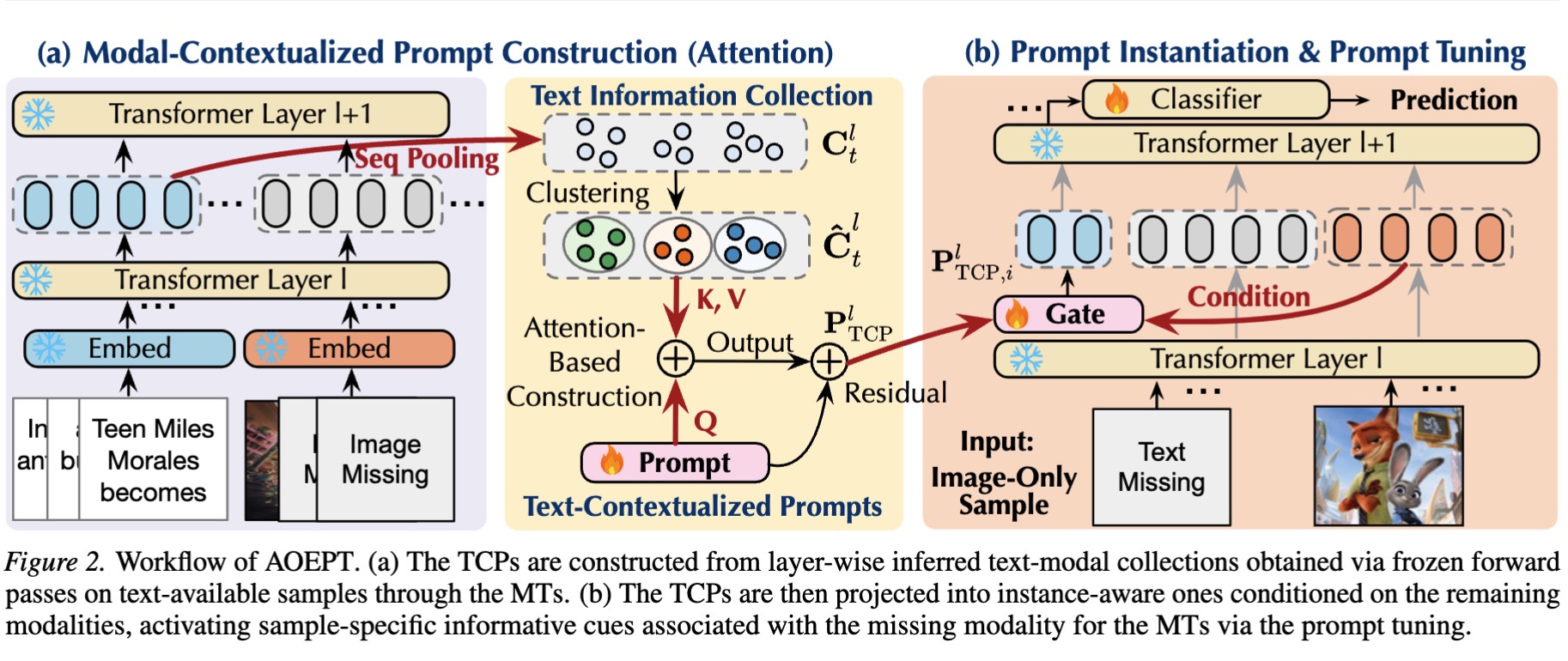

AOEPT: Breaking the Implicit Modality-Reduction Bottleneck in Modality Missing Prompt Tuning

Jian Lang, Rongpei Hong, Ting Zhong, Fan Zhou†

ICML 2026 | CCF A | PDF | Project | GitHub

- The Implicit Modality-Reduction (IMR) bottleneck in existing modality-missing prompt-tuning methods, and a new metric, Normalized Missing-modality Mutual Information (NM2I), that quantifies IMR.

- A minimalist Modal-Contextualized Prompting method (AOEPT) that breaks the IMR bottleneck.

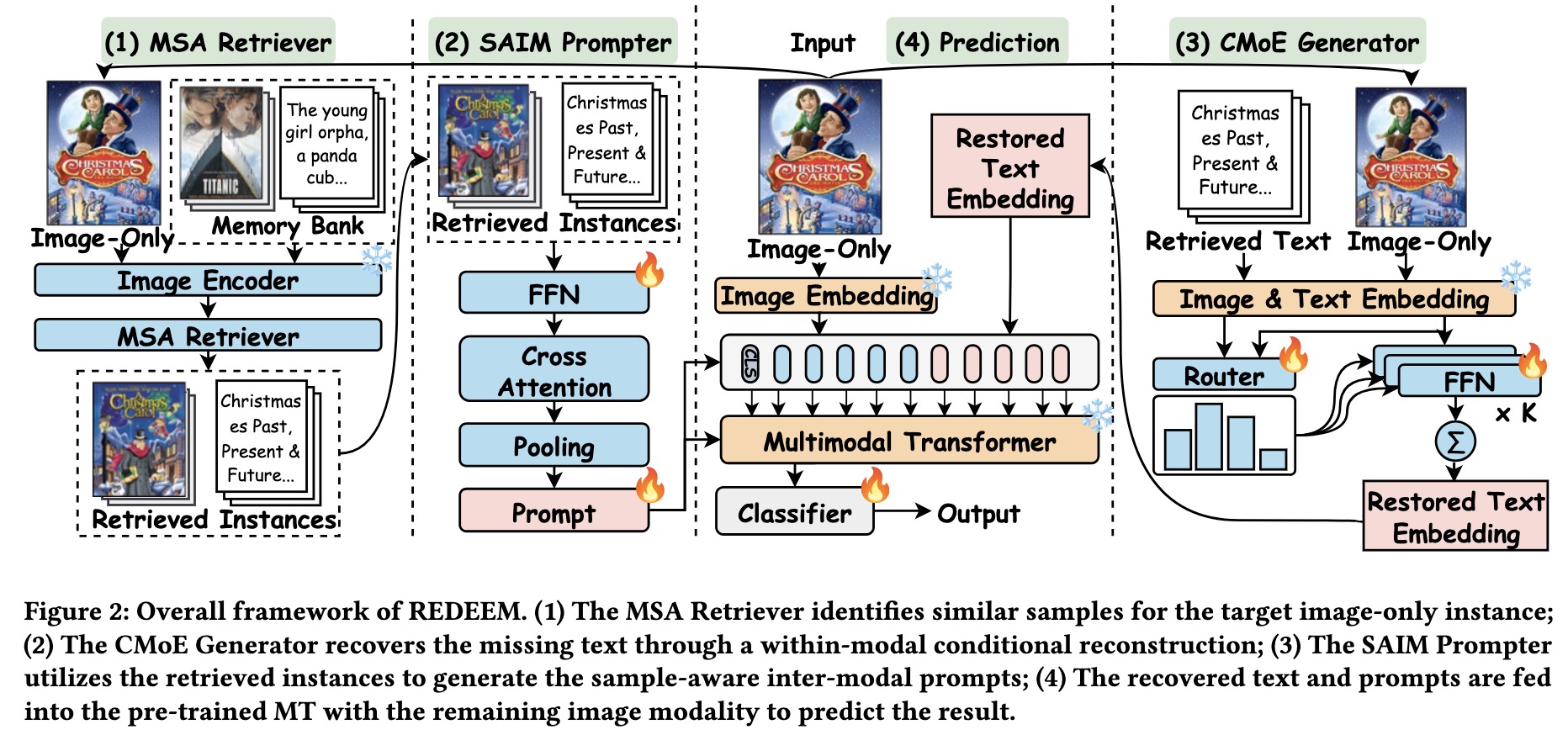

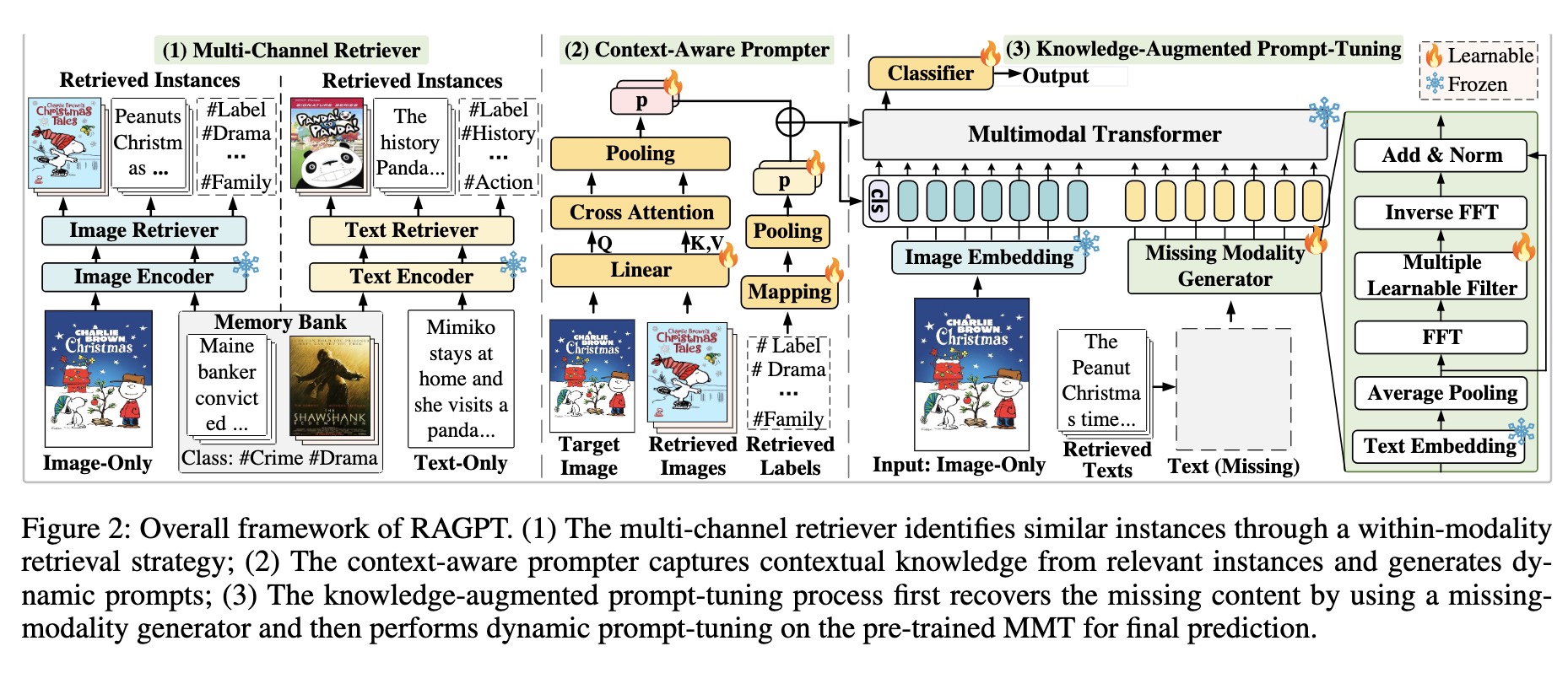

Retrieval-Augmented Dynamic Prompt Tuning for Incomplete Multimodal Learning

Jian Lang*, Zhangtao Cheng*, Ting Zhong, Fan Zhou†

AAAI 2025 | CCF A | PDF | GitHub

- RAGPT, a novel retrieval-augmented dynamic prompt-tuning framework for enhancing the modality-missing robustness of pre-trained multimodal Transformers.

⚓ Robust Against Domain (Distribution) Shift

🪙 Robust Against Data / Label Scarcity

🧑‍🦱 Personalized Multimodal Learning

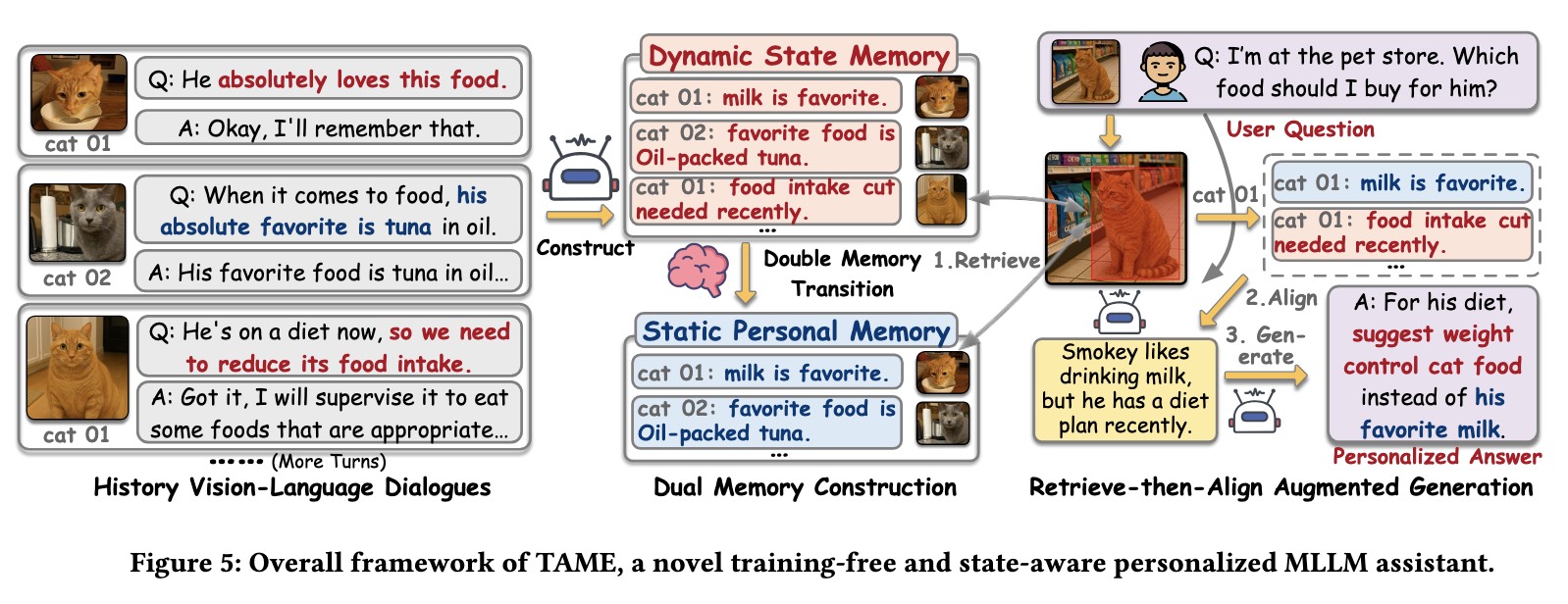

🧏 MLLM Personalized Understanding

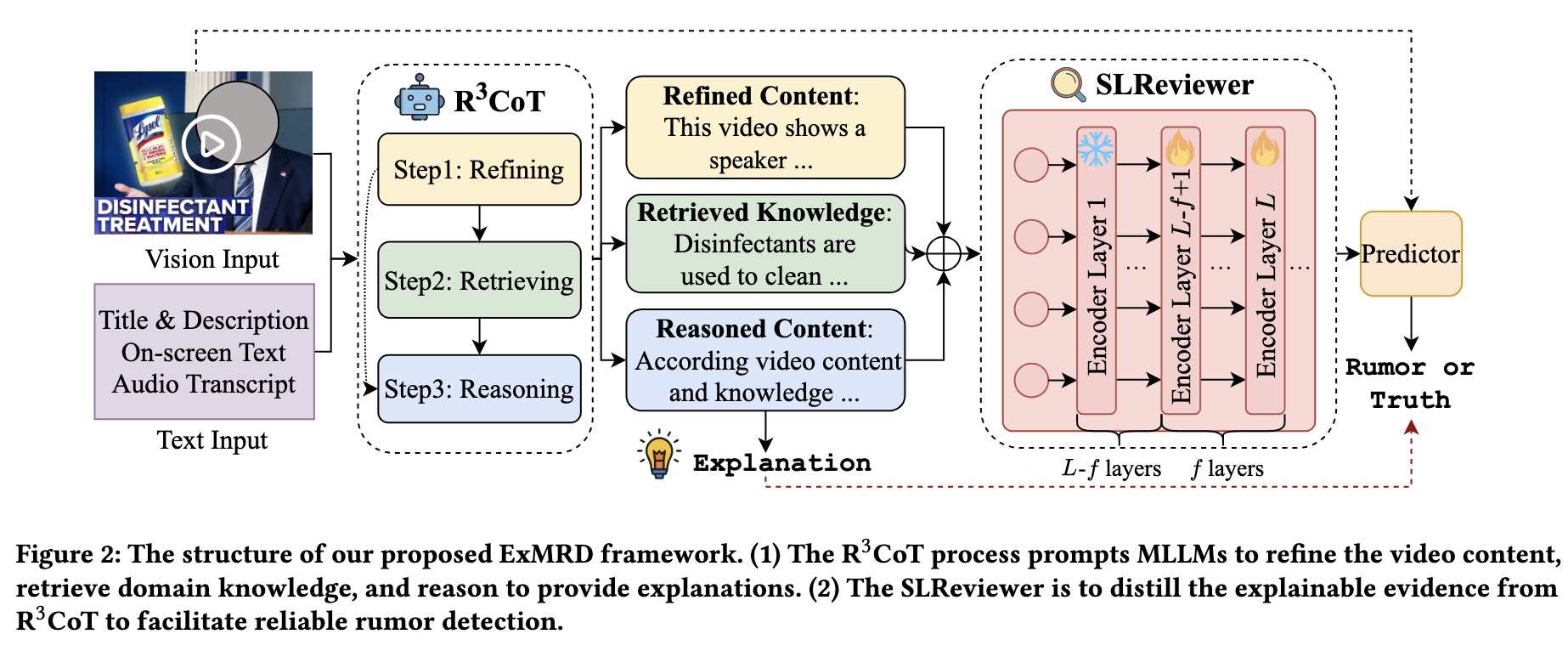

🎥 Video Analysis & Detection

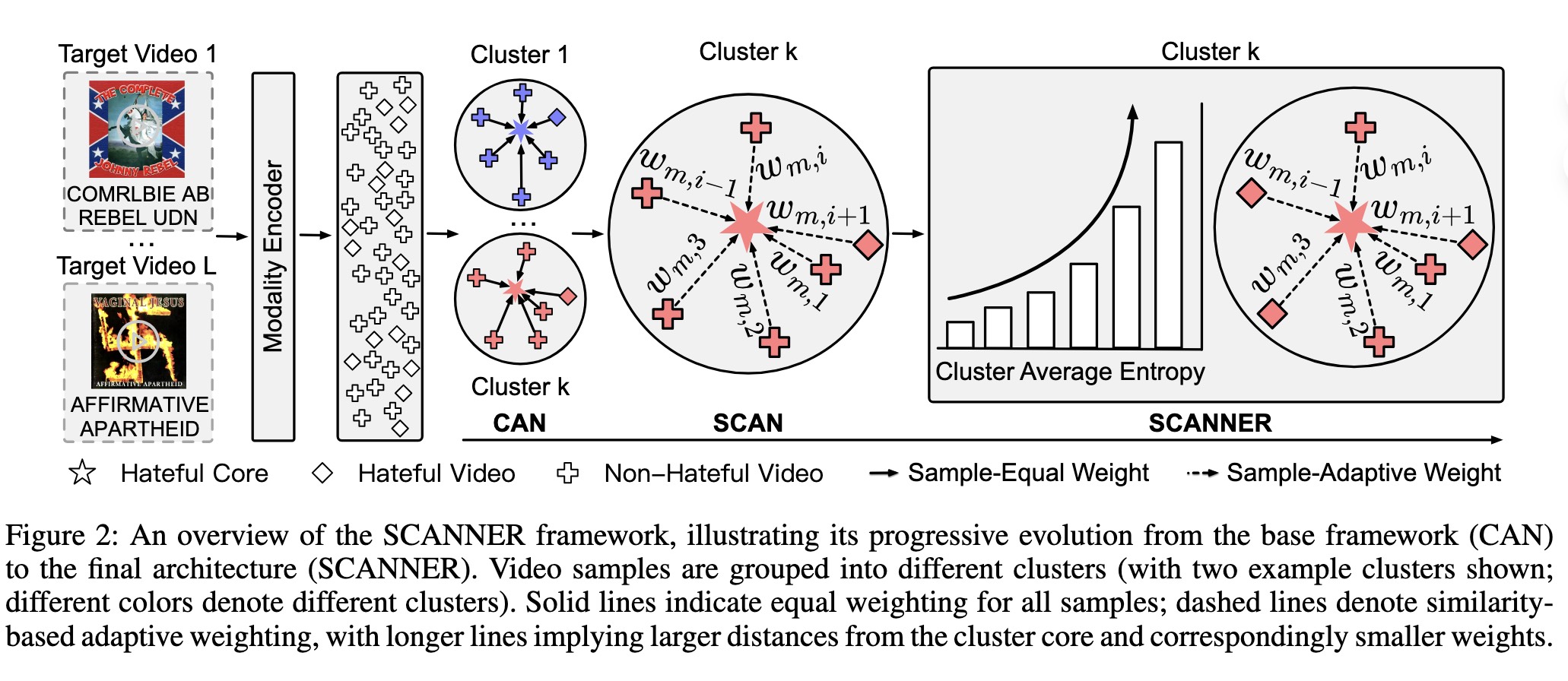

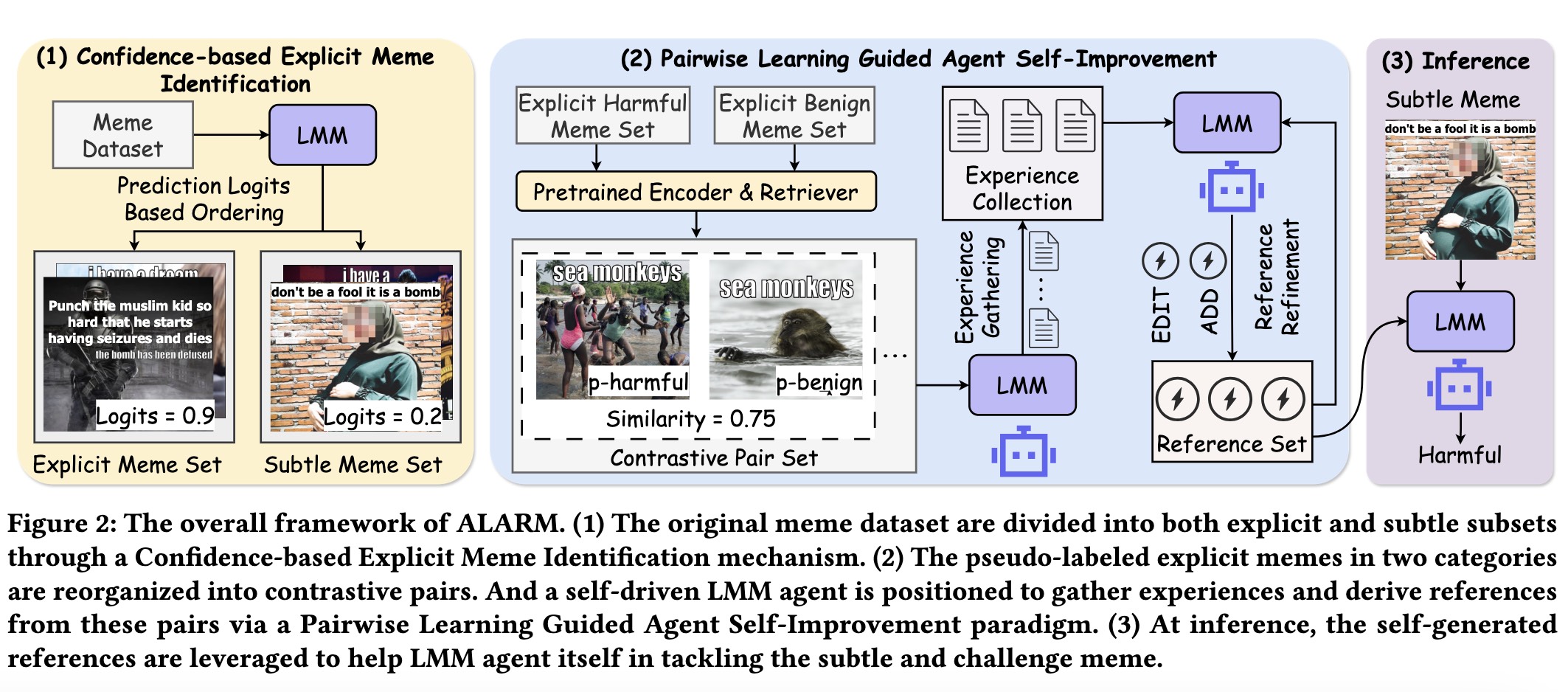

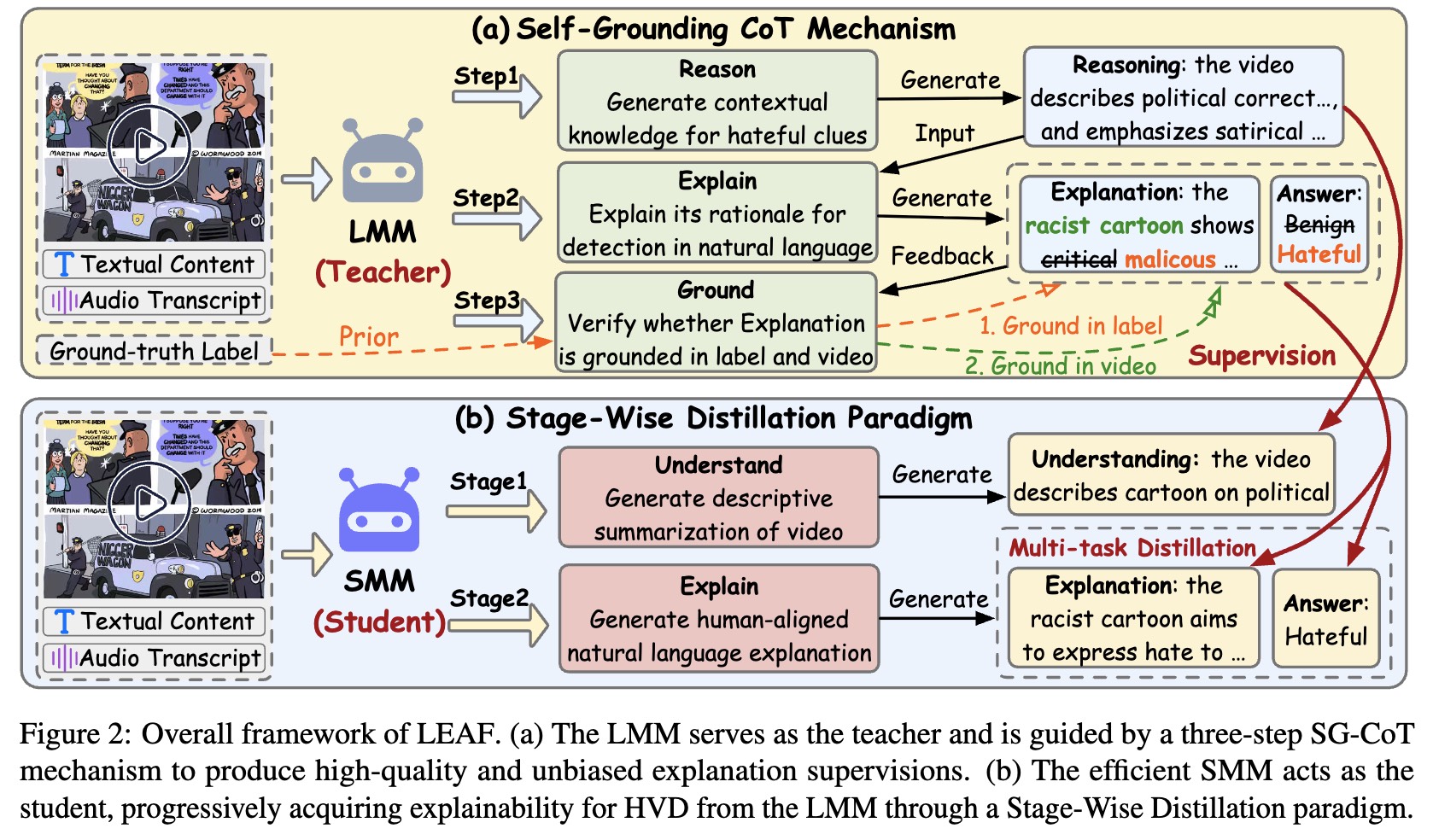

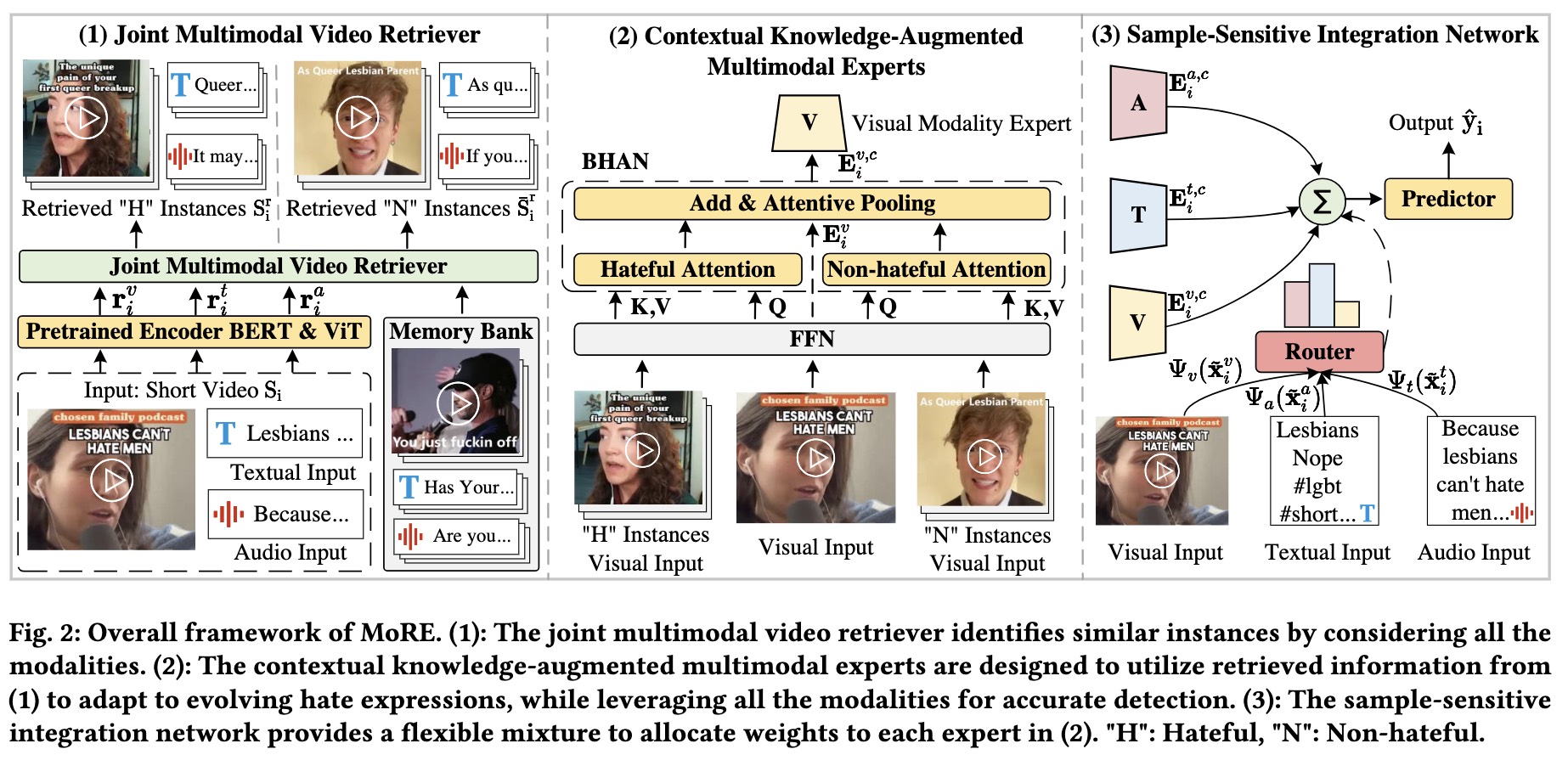

MATCH: Multi-Agentic Evidence Grounding for Explainable Hate Video Detection

Kaiju Li, Rongpei Hong, Jian Lang, Jin Wu†, Fan Zhou†, Jingkuan Song

TCSVT 2026 | CAS Q1 Top | PDF

- MATCH, a novel multi-agent LMM collaboration framework for interpretable hate video detection.

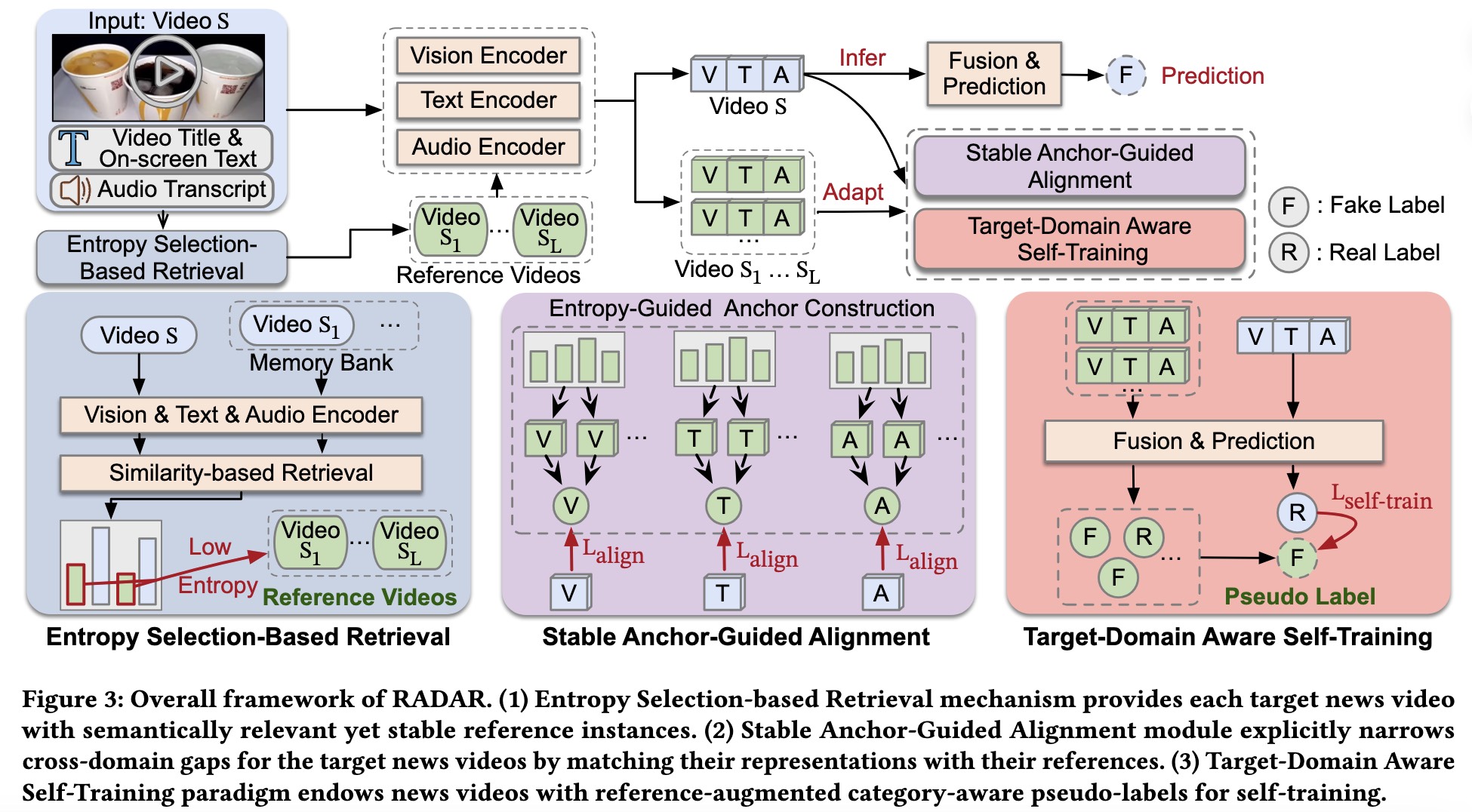

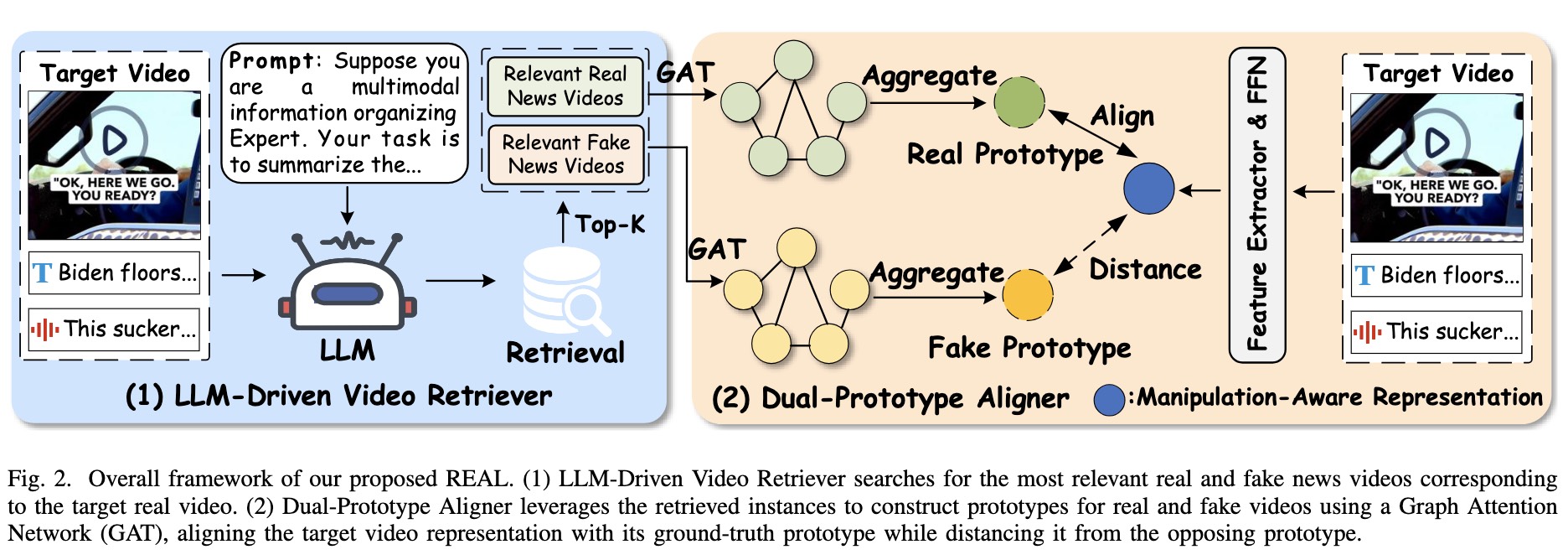

REAL: Retrieval-Augmented Prototype Alignment for Improved Fake News Video Detection

Yili Li, Jian Lang, Rongpei Hong, Qing Chen, Zhangtao Cheng, Jia Chen, Ting Zhong, Fan Zhou†

ICME 2025 | CCF B | PDF | GitHub

- REAL, a novel model-agnostic framework that generates manipulation-aware representations to help existing methods detect fake news videos that differ only subtly from the original authentic ones.

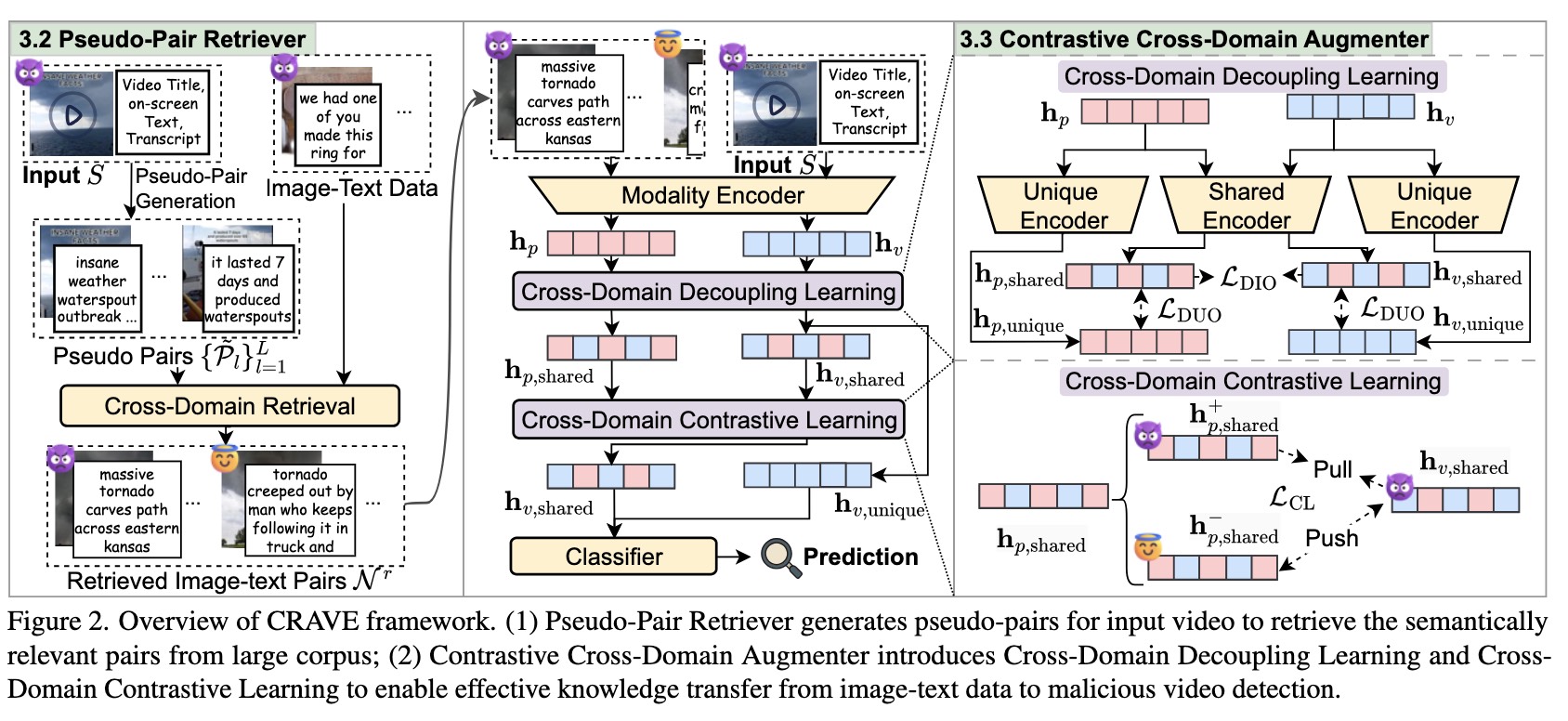

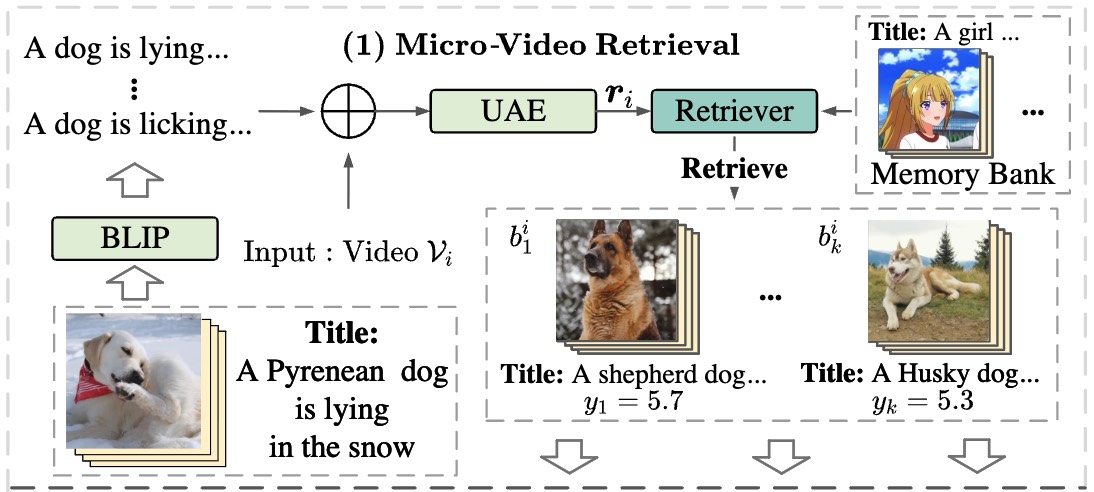

Predicting Micro-video Popularity via Multi-modal Retrieval Augmentation

Ting Zhong, Jian Lang, Yifan Zhang, Zhangtao Cheng, Kunpeng Zhang, Fan Zhou†

SIGIR 2024 Short | PDF | GitHub

- MMRA, a multi-modal retrieval-augmented popularity prediction model that enhances prediction accuracy using relevant retrieved information.

🎖 Honors and Awards

- 2025.10 National Scholarship (Top 1%)

- 2025.10 Master’s Student Academic Scholarship (1st Division, Ranked 1st)

- 2024.10 National Scholarship (Top 1%)

- 2024.10 Master’s Student Academic Scholarship (1st Division, Ranked 1st)

- 2023.12 Artificial Intelligence Algorithm Challenge Runner-up (2nd), hosted by People’s Daily Online

📖 Education

- 2023.09 - Present, PhD Student, University of Electronic Science and Technology of China

- 2019.09 - 2023.06, Undergraduate, Fuzhou University

📝 Peer Review

- Conference Review: NeurIPS 2026 Reviewer, KDD 2026 Reviewer, ICML 2026 (Emergency) Reviewer, AAAI 2026 Reviewer

- Journal Review: IJCV Reviewer, TPAMI Reviewer, TCSVT Reviewer, KBS Reviewer, ESWA Reviewer

💻 Internships

- 2022.03 - 2022.06, Ruijie Networks, Software Development Intern.